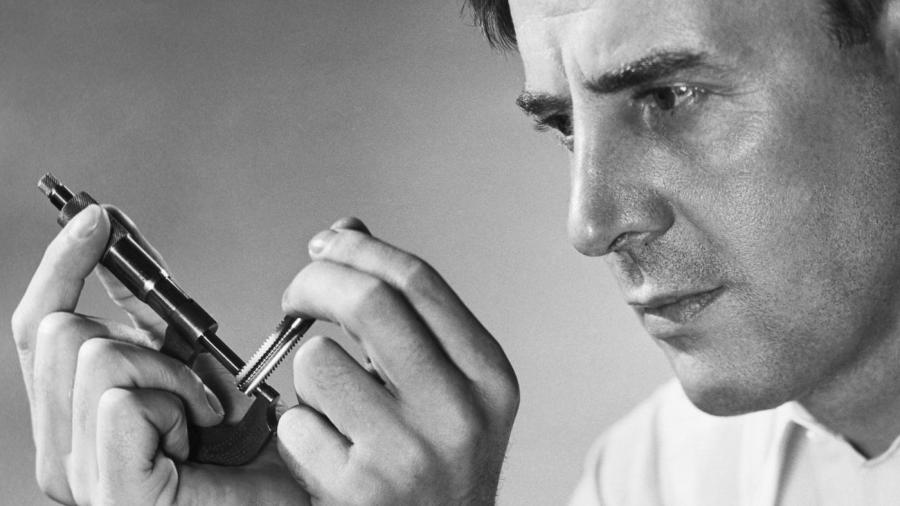

What Is Zero Error on a Micrometer Screw Gauge?

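A micrometer screw gauge has a zero error when the flat face of the spindle (the end of the screw) touches the anvil (stud) and the gauge reads something other than zero. The error is positive if the gauge reads above zero with the jaws closed and negative if it reads below zero. Either way, it must be taken out of every reading made with that gauge: corrected reading = observed reading minus zero error. Well-calibrated micrometers in good condition read very close to zero when closed, so they do not introduce a noticeable error.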
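The correction can be sketched in a few lines of Python. This is a minimal illustration, assuming a common metric screw gauge with a 0.5 mm pitch and a 50-division thimble (least count 0.01 mm); the function names and scale values are hypothetical, not taken from any standard.

```python
# Minimal sketch: correcting a screw-gauge reading for zero error.
# Assumed (illustrative) gauge: 0.5 mm pitch, 50-division thimble.
PITCH_MM = 0.5
THIMBLE_DIVISIONS = 50
LEAST_COUNT_MM = PITCH_MM / THIMBLE_DIVISIONS  # 0.01 mm per thimble division


def reading_mm(main_scale_mm: float, thimble_division: int) -> float:
    """Sleeve (main scale) reading plus thimble divisions times the least count."""
    return main_scale_mm + thimble_division * LEAST_COUNT_MM


def zero_error_mm(division_at_datum: int, zero_below_datum: bool) -> float:
    """Signed zero error, read with the spindle closed against the anvil.

    Thimble zero below the datum line: the gauge over-reads, error is positive.
    Thimble zero above the datum line: the gauge under-reads, error is negative.
    """
    if zero_below_datum:
        return division_at_datum * LEAST_COUNT_MM
    return -(THIMBLE_DIVISIONS - division_at_datum) * LEAST_COUNT_MM


def corrected_reading_mm(observed_mm: float, zero_error: float) -> float:
    """Corrected reading = observed reading minus the signed zero error."""
    return observed_mm - zero_error


if __name__ == "__main__":
    observed = reading_mm(5.5, 28)                     # 5.5 mm sleeve + 28 divisions
    error = zero_error_mm(3, zero_below_datum=True)    # +0.03 mm
    print(round(corrected_reading_mm(observed, error), 2))  # 5.75
```

With a negative zero error the sign flips the correction: if division 47 sits on the datum line with the thimble zero above it, the error is -0.03 mm and the same 5.78 mm observation corrects to 5.81 mm.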

Machinists test the accuracy of micrometers with gauge blocks or rods of precisely known size. If they find an error, they recalibrate the instrument, removing zero error by adjusting it so that it reads zero when the spindle and anvil are closed. Proper calibration requires care and a clean instrument and standard. Most micrometers are designed so that a pin spanner can turn the sleeve (barrel) relative to the frame, which repositions the zero line.
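A check against gauge blocks amounts to comparing each reading with the block's known size. The sketch below is a hypothetical Python illustration: the function names, the ±0.0001 inch tolerance (borrowed from the rated accuracy of a one-inch micrometer), and the sample readings are all assumptions, not a prescribed procedure.

```python
# Hypothetical sketch: compare micrometer readings with gauge blocks of known size.
# Sizes are in inches; the tolerance and sample readings are illustrative only.


def check_against_standards(
    readings: dict[float, float], tolerance: float
) -> list[tuple[float, float, float, bool]]:
    """For each gauge block, return (nominal, measured, deviation, within_tolerance)."""
    results = []
    for nominal, measured in sorted(readings.items()):
        deviation = measured - nominal
        results.append((nominal, measured, deviation, abs(deviation) <= tolerance))
    return results


def needs_recalibration(readings: dict[float, float], tolerance: float) -> bool:
    """True if any gauge-block reading falls outside the tolerance."""
    return not all(row[3] for row in check_against_standards(readings, tolerance))


if __name__ == "__main__":
    sample = {0.1000: 0.1000, 0.5000: 0.5000, 1.0000: 1.0003}  # made-up readings
    for nominal, measured, deviation, ok in check_against_standards(sample, 0.0001):
        status = "OK" if ok else "OUT"
        print(f"{nominal:.4f} in: read {measured:.4f}, off by {deviation:+.4f} ({status})")
    print("recalibrate:", needs_recalibration(sample, 0.0001))
```

If every block is off by roughly the same amount, that pattern typically points to a zero offset, which is the kind of error the sleeve adjustment corrects.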

In commercial shops, contracts often require that micrometers be calibrated annually. Even so, machinists should check for zero error against standards every two to three months; in most cases, these checks reveal no error.
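An annual calibration with interim zero checks every two to three months can be turned into concrete dates. This is a hypothetical Python helper, assuming the interim checks are counted in whole calendar months from the last calibration; the function names are illustrative.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of a shorter month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def zero_check_dates(last_calibration: date, interval_months: int = 3) -> list[date]:
    """Interim zero-error checks between one annual calibration and the next."""
    next_calibration = add_months(last_calibration, 12)
    checks = []
    step = interval_months
    # Count each check from the calibration date so month-end clamping never drifts.
    while (check := add_months(last_calibration, step)) < next_calibration:
        checks.append(check)
        step += interval_months
    return checks


if __name__ == "__main__":
    print([d.isoformat() for d in zero_check_dates(date(2024, 1, 15))])
    # ['2024-04-15', '2024-07-15', '2024-10-15']
```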

Standard one-inch micrometers have readout divisions of 0.001 inch and a rated accuracy of ±0.0001 inch. For measurements at that accuracy, both the gauge and the material being measured must be at room temperature, because thermal expansion changes their dimensions. Dirt, abuse, and operator mistakes are the largest sources of error when using micrometers.
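A short worked example shows why temperature matters at this level of accuracy. Linear thermal expansion gives ΔL = α·L·ΔT; the sketch below assumes a steel part with α ≈ 11.5 × 10⁻⁶ per °C (a typical figure that varies by alloy) and a hypothetical 8 °C difference from room temperature.

```python
# Sketch: growth of a part that is warmer than room temperature.
# Linear thermal expansion: delta_L = alpha * L * delta_T.
ALPHA_STEEL_PER_C = 11.5e-6  # assumed typical coefficient for steel, per deg C
ACCURACY_IN = 0.0001         # rated accuracy of a standard one-inch micrometer


def thermal_growth(length: float, delta_t_c: float, alpha: float = ALPHA_STEEL_PER_C) -> float:
    """Change in length (same units as length) for a temperature change in deg C."""
    return alpha * length * delta_t_c


if __name__ == "__main__":
    growth = thermal_growth(1.0, 8.0)  # 1 inch of steel, 8 deg C too warm
    print(f"{growth:.6f} in")                                # 0.000092 in
    print(f"{growth / ACCURACY_IN:.0%} of rated accuracy")   # 92% of rated accuracy
```

Under these assumptions, a modest temperature difference uses up most of the ±0.0001 inch accuracy budget before any other error is counted.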